what tasteslop means for tech, AI, and new media

Originally posted on X

Marc Andreessen believes that venture capital may be one of the only jobs to survive AI automation because “the art of picking” boils down to taste. Paul Graham has declared that “in the AI age, taste will become even more important.”

Something is shifting in how the tech industry talks about taste, and the artifacts are becoming uncanny: an AI-generated moodboard laden with TASCHEN books and a Chemex; an apartment staged with an Eames lounge chair in the foreground of a Vitsœ shelving system.

But when “taste” is deployed en masse, what is actually being bought and sold?

Emily Segal has named the most visible symptom of this phenomenon tasteslop: what occurs when the visible signals of taste are extracted from the contexts that produced them and then redeployed. In her words, it is “cultural capital after extraction, after it’s been through the blender.”

Segal draws on French sociologist Pierre Bourdieu’s Distinction (1979), which argues that taste is not spontaneous or natural but systematically produced by class position, and that the two dominant forms of capital defining these positions are economic capital and cultural capital. His later essay “The Forms of Capital” (1986) asks an even sharper question: Can economic capital transform into cultural capital?

Bourdieu’s answer is yes, but only with losses inherent to the process of conversion. Economic capital cannot transform directly into cultural capital, but it can purchase the conditions under which cultural capital might be accumulated through subsequent embodied labor.

The framework I often find myself returning to is that different forms of capital have different liquidity profiles. Economic capital is liquid and freely exchangeable, while cultural capital is illiquid by design; it actually degrades when its conversion becomes too visible. According to Bourdieu, cultural capital functions as capital only through its disguise, which he calls “misrecognition.” Historically, successful conversion meant the slow accumulation of cultural capital through the investment of economic capital over time. The artifacts of cultural capital were downstream of the accumulation that produced them. This is the structural property that allows cultural capital to function as capital at all: it is seen as natural taste, discernment, or legitimate competence rather than as a product of unequal power.

If the illiquidity of cultural capital is its greatest signifier of value, another question emerges: What happens to cultural capital when the cost of production races to zero?

The cost of signals of taste is a well-trodden debate throughout the history of technology (printed reproduction, photography, social media), but it comes to a head in the age of AI.

Generative tools produce moodboards, brand identities, and the former artifacts of belabored reference-culling at near-zero cost and time. AI is able to identify and index organic cultural capital formations almost as quickly as they appear. The cost of producing signal collapses, while the rate at which authentic cultural capital can be produced does not accelerate in parallel. When the visible signals of cultural capital are acquired without the friction and misrecognition that allow them to function as capital, what is produced is not cultural capital at all. It is something else: visible, expensive, often technically accomplished, but structurally inert.

Can the System Itself Have Taste?

Cultural capital, on Bourdieu’s account, requires three conditions that AI’s mode of operation does not satisfy.

Embodiment: Bourdieu insists that the most durable cultural capital is produced by embodied life rather than by access to information. You can pay for piano lessons. You cannot pay for the cultivated ear. AI has access to the entire indexed corpus of cultural production, but not the embodied accumulation—the capacity to detect, through bodily disposition rather than pattern matching—that produces taste.

Misrecognition: Cultural capital functions as capital only when its function as capital is disguised. An LLM’s mode of operation is explicitly indexation and recombination; there is no disguise possible. The capital cannot be misrecognized when its conditions of production are advertised.

Time: The conversion work of embodied accumulation that Bourdieu describes takes lifetimes. AI’s mode of operation is its precise opposite: speed. Even if the system could somehow develop something like embodied judgment, it could not do so under the conditions Bourdieu identifies as necessary, because those conditions are themselves slow.

Bourdieu’s description of embodiment rests on a deeper philosophical one. In The Tacit Dimension (1966), Michael Polanyi argued that all knowledge has a dimension that cannot be made fully explicit: practiced judgment, the trained eye, transmitted through embodied apprenticeship rather than instruction. Through Hubert Dreyfus and his book What Computers Can’t Do: A Critique of Artificial Reason (1972), this becomes the strongest claim against AI’s capacity for skilled judgment: what cannot be made explicit cannot be encoded. AI has access to what has been made explicit. The tacit dimension is absent not because it has been overlooked, but because it cannot, in principle, be there.

A Bourdieusian Precedent: Zombie Formalism

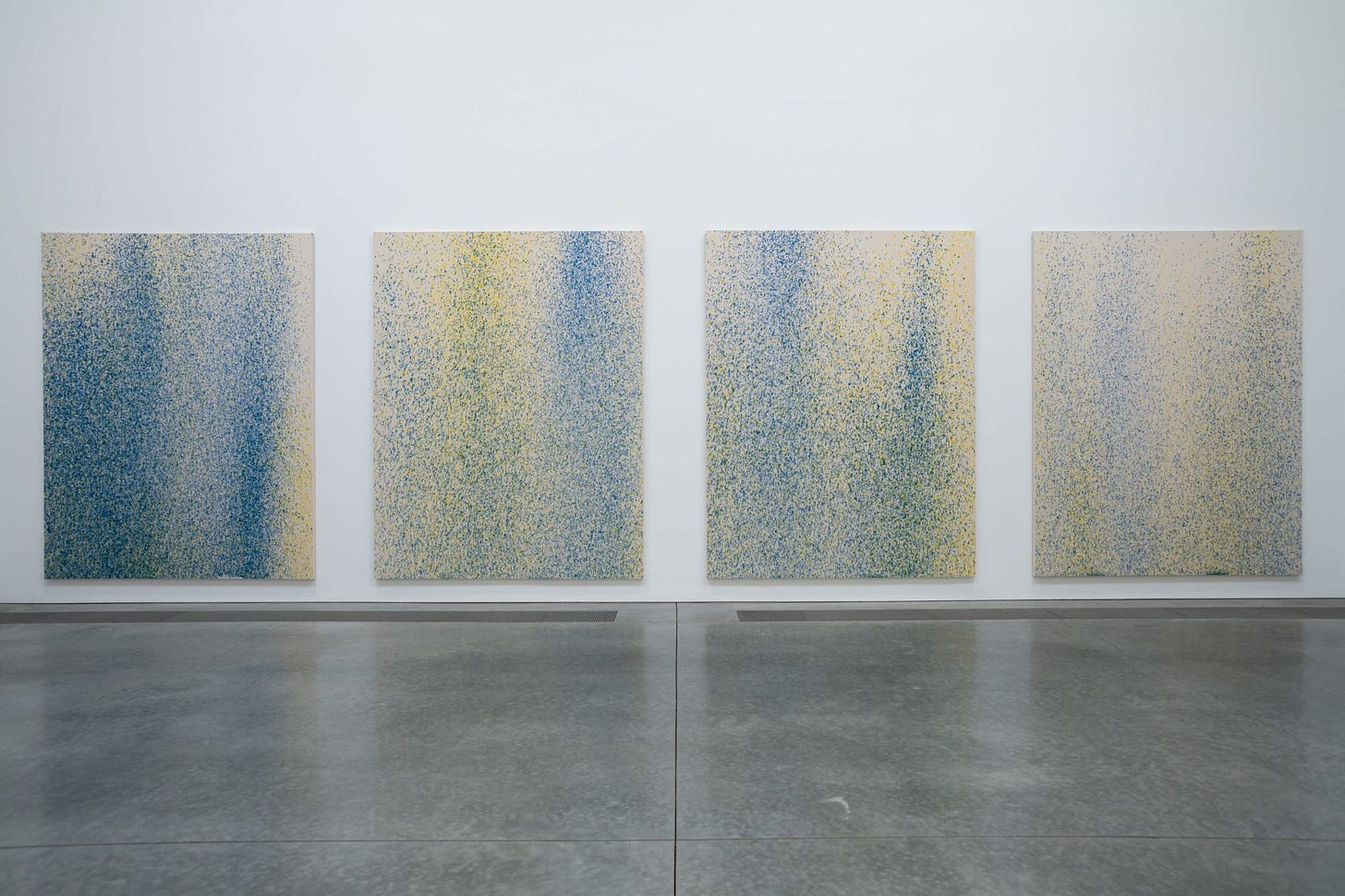

The clearest recent precedent for tasteslop is Zombie Formalism, an art trend from the 2010s. You’ve absolutely encountered Zombie Formalist works in the wild: large-scale abstract paintings, gestural, muted, industrial in finish. These were works that photographed cleanly for the new Instagram age or were compatible with compressed digital displays optimized for online circulation. Zombie Formalism thrived within legible market dynamics. Visibility reinforced demand; demand reinforced visibility. Value accrued through circulation rather than through the slow consolidation of meaning.

The resulting artifacts carried the visible marks of how they were acquired. The critic Jerry Saltz, writing in New York Magazine in 2014, complained that the work was “modest, conservative, and maddeningly recursive.” Seen through a Bourdieusian lens, Zombie Formalism was a textbook conversion failure. Economic capital attempted to acquire cultural capital through accelerated market infrastructure that bypassed the conditions of artistic development and community that historically produced stable cultural value. This was the art world registering that the misrecognition had failed.

“New Media” and the Editorial Borrowing

The Zombie Formalism phenomenon was largely isolated to the art world, but we see similar dynamics emerging in tech, an industry that has positioned itself against institutional authority for decades. This antagonism has now turned toward the editorial class, treating journalists as adversaries and the institutions that employ them as illegitimate sources of cultural authority.

And yet across the contemporary tech media landscape…TBPN borrows the visual language of ESPN. Colossus borrows the house style of New Yorker profiles. What is being constructed is an insular media universe with the tech industry as its cultural center, borrowing the aesthetic authority of legacy editorial to lend seriousness to the enterprise. Some efforts succeed more than others. Where a tech-native publication has done substantive editorial work, the borrowed visual register has more to rest on, and the conversion lands closer to genuine cultural production. The general case is otherwise: imitation at the level of signs without the institutional labor that produced their meaning.

The most extreme version is what I have come to think of as the Marina Abramović-ification of podcasts. Sparse staging, ritualized stillness, dramatic lighting, an air of self-serious gravitas. The cinematography is real, the writing is often genuinely good. But the attempts often sit at the same point in the conversion process that AI-generated moodboards do. The guests look uncomfortable. The sets look aspirational rather than authoritative. Each pairing is a Bourdieusian conversion attempt—economic capital purchasing the production values that historically signaled editorial cultural capital.

AI turns what began as a simple contradiction into an impending crisis. The industry is now embedding generative systems into every layer of production—engineering, marketing, design—at the exact moment when it requires editorial legitimacy. The promise of AI to automate creative labor threatens the credibility of any artifact it produces.

Audiences trained by AI-mediated feeds now read certain visual cues as serious—the typography of The New Yorker, the framing of a long-form documentary—which means that former markers of editorial legitimacy have decoupled from the cultural histories that produced them. The legitimacy crisis trains its own resolution. AI floods the feed; the feed trains audiences to read borrowed signals as authoritative; the audience’s confirmation licenses more AI; more AI deepens the crisis. The loop closes.

The AI era of tasteslop is in danger not of producing bad taste, but of producing a generation of audiences unable to discern. AI did not invent this dynamic, but it has industrialized it. AI is the first technology of cultural production that can produce signals without a producer. The system does not occupy a position. It does not inherit a tradition. It does not contest a canon. It generates from no location, with no relation to the material it recombines. This is structurally new, and the consequences for the broader cultural ecosystem are not yet metabolized.

What survives, if anything does, will be the cultural production that retains the conditions taste used to require, along with the audiences capable of registering those conditions. The conditions are structural, and they are produced under friction rather than at scale. They depend on inheritance, contest, and the slow accumulation of positions held over time. There is a great deal the tech industry could learn from how legacy editorial institutions built authority: through slow editorial judgment, the discipline of being right when others were wrong, and the willingness to inhabit institutional forms rather than route around them.

Andreessen and Graham are right that taste will matter more in the AI age. What they miss is that the same technology elevating taste as a moat is dissolving the conditions under which taste can function as one. The pressing danger before us is not that AI will have bad taste, but that taste will become indistinguishable from its most reproducible signals—and that, eventually, fewer people will remember there was ever a difference.